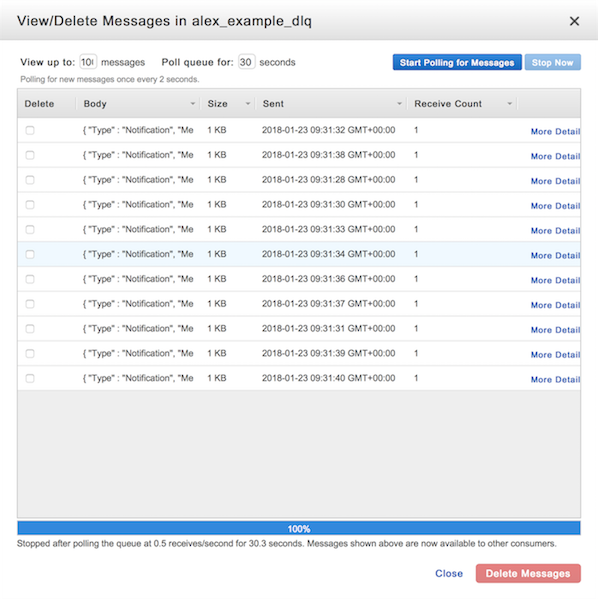

To make our pipelines more efficient, we adjust the number of tasks in our ECS services to match the available work. If there’s lots of work to do, we run lots of tasks. If there’s nothing to do, we don’t run any tasks. This introduces some latency (if the pipeline is scaled down and new work arrives, we have to wait for the pipeline to scale up), but we don’t need real-time processing, so the efficiency gains are worth it.

We use CloudWatch to automatically adjust the number of tasks.

[Diagram: A process diagram showing an SQS queue, a CloudWatch metric and alarm, and an ECS service. One path goes queue to metric (sends queue metrics), metric to alarm (triggers alarm), alarm to service (updates task count). Another path goes queue to service (processes messages).]

Each SQS queue sends metrics to CloudWatch, telling it how many messages it has. We have CloudWatch alarms based on those metrics, and when they’re in the ALARM state, they adjust the task count. In particular, we have two alarms per service:

The “scale up” alarm: if there are messages waiting on the queue, start new tasks. This alarm uses the ApproximateNumberOfMessagesVisible metric, the number of messages available to retrieve from the queue. The alarm adds tasks if this metric is non-zero.

The “scale down” alarm: if the queues are empty, stop any running tasks. This alarm uses the sum of the ApproximateNumberOfMessagesVisible, ApproximateNumberOfMessagesNotVisible and NumberOfMessagesDeleted metrics. This is the number of messages available to retrieve, the number of messages currently being processed by a service, and the number of messages that have just been deleted. The alarm stops tasks if this sum is zero.
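The two alarm rules reduce to simple checks on a single reading of the three metrics. The following is a minimal Python sketch that assumes one integer value per metric. The names `QueueMetrics`, `scale_up_alarm`, `scale_down_alarm` and `scaling_action` are hypothetical. The sketch models only the decision logic. It does not cover CloudWatch itself, metric delays, or how an alarm actually changes the ECS task count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueMetrics:
    """One hypothetical reading of the SQS queue metrics the alarms use."""

    visible: int      # ApproximateNumberOfMessagesVisible: waiting to be retrieved
    not_visible: int  # ApproximateNumberOfMessagesNotVisible: currently being processed
    deleted: int      # NumberOfMessagesDeleted: just deleted (finished)


def scale_up_alarm(m: QueueMetrics) -> bool:
    """'Scale up' fires when any messages are waiting on the queue."""
    return m.visible > 0


def scale_down_alarm(m: QueueMetrics) -> bool:
    """'Scale down' fires only when the sum of all three metrics is zero."""
    return m.visible + m.not_visible + m.deleted == 0


def scaling_action(m: QueueMetrics) -> str:
    """Return which alarm, if either, would be in the ALARM state."""
    if scale_up_alarm(m):
        return "scale up"
    if scale_down_alarm(m):
        return "scale down"
    # Messages are in flight or were just deleted: leave the task count alone.
    return "no change"


if __name__ == "__main__":
    print(scaling_action(QueueMetrics(visible=10, not_visible=0, deleted=0)))  # scale up
    print(scaling_action(QueueMetrics(visible=0, not_visible=3, deleted=0)))   # no change
    print(scaling_action(QueueMetrics(visible=0, not_visible=0, deleted=0)))   # scale down
```

Because the visible count is part of the scale-down sum, the two alarms cannot fire at the same time. When no messages are waiting but some are in flight or were just deleted, neither alarm fires, so the running tasks are left alone.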

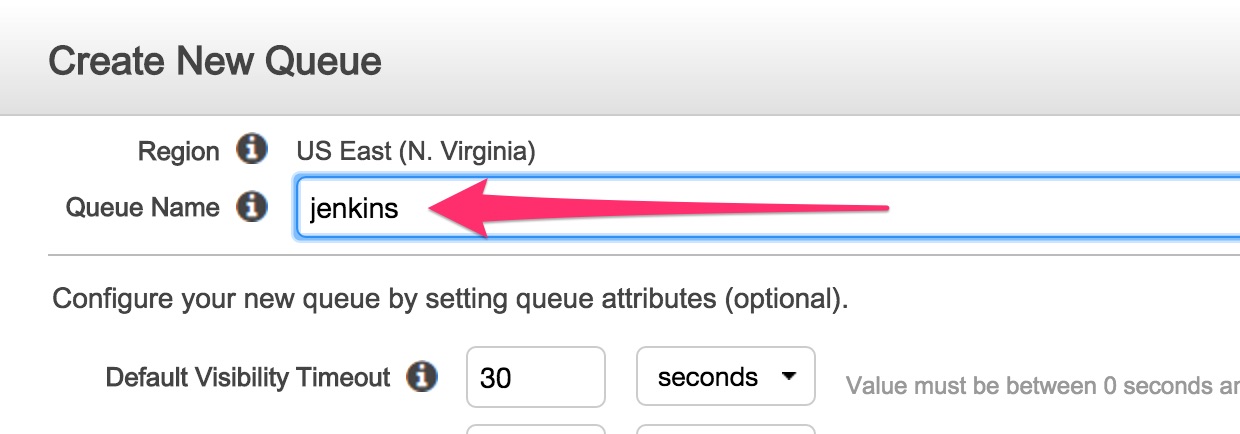

Tagged with amazon-cloudwatch, amazon-sqs, aws

A couple of weeks ago, I fixed what’s been a long-standing and mysterious bug in our apps, which was caused by a new-to-me interaction between SQS and CloudWatch metrics. The bug hasn’t recurred, so I’m fairly confident my fix has worked. I talked about the bug at a team meeting, because I found it quite interesting – and I’m writing it down to share it with a wider audience. I’m sure I’m not the only person who misunderstood the SQS/CloudWatch relationship, and in particular the implications of delayed metrics.

We have a set of pipelines for processing data – multiple apps connected by queues. Each app receives messages from an input queue, does some processing, then sends another message to an output queue for the next app to work on.

[Diagram: A process diagram showing a data pipeline. There are three components (left to right): an input queue, a worker (app), and an output queue. The worker processes incoming messages from the input queue, and sends ongoing messages to the output queue.]

We use Amazon SQS for our queues, and Amazon ECS to run instances of our apps.

Our pipelines are typically triggered by a person doing something, say, a librarian editing a catalogue record, so the amount of work for the pipeline to do will vary. There’s plenty of work at 11am on a Tuesday, less so at midnight on a Sunday.

A Serverless plugin that simplifies the setup of CloudWatch Alarms to monitor the visible messages in an SQS queue.

Installation

From your target serverless project, install the plugin with `npm install --save-dev`, then add it to the `plugins` list in your serverless.yml.

Configuration

Configure alarms in serverless.yml:

```yaml
custom:
  sqsAlarms:
    - name: your-alarm-name # optional, unless your queue is a reference (e.g. `Ref`)
      queue: your-sqs-queue-name
      topic: your-sns-topic-name # references can be used, e.g. `Ref`, `Fn::ImportValue`
      treatMissingData: breaching # optional
      evaluationPeriods: 1 # optional, default 1
      period: 60 # optional, default 60
      thresholds:
        - 1
        - 50
        - value: 500
          period: 300 # optional, overrides upper level config
          evaluationPeriods: 1 # optional, overrides upper level config
          treatMissingData: ignore # optional, overrides upper level config
```

The treatMissingData setting can be a string, which is applied to all alarms, or an array to configure alarms individually. Valid types are ignore, missing, breaching and notBreaching (more details in the AWS docs).

In the example above, your SNS topic would receive a message when there are more than 1, 50, and 500 messages visible in SQS.

Available CloudWatch metrics for Amazon SQS.

This project has been forked from the original serverless-sqs-alarms-plugin and published under a different name, as the original has been abandoned.

For the complete information, please refer to the license file.
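The `thresholds` list mixes bare numbers with mappings that override the upper-level settings. The following Python sketch models how such a configuration could expand into one alarm definition per threshold. It is not the plugin’s actual code, and `expand_thresholds` and `VALID_MISSING_DATA` are hypothetical names. The sketch also makes two assumptions: that each threshold becomes its own alarm, and that treatMissingData is a single string. The array form is not modelled.

```python
from typing import Any, Dict, List

# Valid treatMissingData types as listed in the README.
VALID_MISSING_DATA = {"ignore", "missing", "breaching", "notBreaching"}


def expand_thresholds(alarm: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Hypothetical model: one alarm definition per threshold.

    Assumes treatMissingData is a single string or omitted (None here);
    the array form described in the README is not modelled.
    """
    defaults = {
        "period": alarm.get("period", 60),                       # default 60
        "evaluationPeriods": alarm.get("evaluationPeriods", 1),  # default 1
        "treatMissingData": alarm.get("treatMissingData"),       # None = not set
    }
    expanded = []
    for entry in alarm["thresholds"]:
        if isinstance(entry, dict):
            # A mapping such as {value: 500, period: 300}: its keys override the defaults.
            overrides = {k: v for k, v in entry.items() if k != "value"}
            settings = {**defaults, **overrides}
            value = entry["value"]
        else:
            # A bare number such as 1 or 50 uses the upper-level settings unchanged.
            settings = dict(defaults)
            value = entry
        missing = settings["treatMissingData"]
        if missing is not None and missing not in VALID_MISSING_DATA:
            raise ValueError(f"invalid treatMissingData: {missing!r}")
        expanded.append({"threshold": value, **settings})
    return expanded


# The README's configuration example, as the parsed YAML would look.
example = {
    "name": "your-alarm-name",
    "queue": "your-sqs-queue-name",
    "topic": "your-sns-topic-name",
    "treatMissingData": "breaching",
    "evaluationPeriods": 1,
    "period": 60,
    "thresholds": [
        1,
        50,
        {"value": 500, "period": 300, "evaluationPeriods": 1, "treatMissingData": "ignore"},
    ],
}

if __name__ == "__main__":
    for definition in expand_thresholds(example):
        print(definition)
```

In this model, the thresholds 1 and 50 inherit `period: 60` and `treatMissingData: breaching` from the upper level. The 500 threshold uses its own `period: 300` and `treatMissingData: ignore`.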
